I am no expert in snow hydrology, but I do recognize the importance of being able to estimate how much water a snowpack holds and to forecast snowmelt rates. The University of Washington is actively researching this and has enlisted the help of volunteers through the Zooniverse crowdsourcing platform. In the past, I have taken part in Operation War Diary, also hosted on Zooniverse, which applies the same principle: recruit a large number of non-expert volunteers to carry out tasks that researchers could never complete on their own.

From the Snow Spotter web page:

> We have placed time-lapse cameras throughout Olympic National Park in order to better understand how much snow is in forests.
>
> Watersheds can be dominated by forests and snow provides a natural storage of fresh water. Forests can intercept up to 60% of the total annual snowfall and 25-45% of the intercepted snow can be lost from the watershed back to the atmosphere through sublimation. Forest interception thus plays a vital role in our understanding of how much snow is in forests.
>
> The photography will give us a qualitative rank/order description of how much snow is in the trees and inform us on how long the snow lasts in the trees. Through your help we hope to better understand forest snow interception and ultimately how much fresh water storage we have to last us through the dry season.

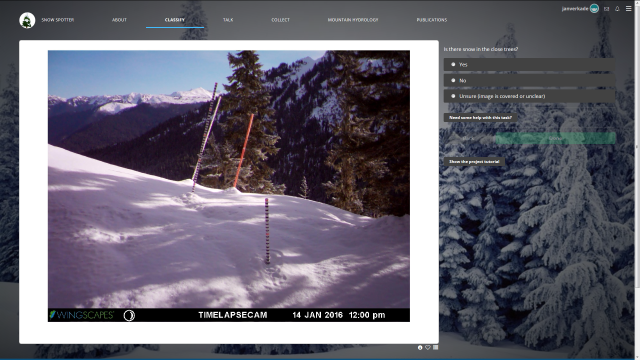

When helping to classify, you will be directed to different images and be prompted to rank the amount of snow in the tree. Don’t be worried if some of the images are fringe cases where you cannot decide how to rank it, just make your best guess!

So, if you have a bit of time on your hands, please consider participating in Snow Spotter. It'll be fun, and in the process you may learn a thing or two!

This is how you’d classify a snow pack within Snow Spotter.